In museums, objects are often exhibited separately. Tools are shown alongside other tools, and glass objects are exhibited together with other glass objects. How do you tell a coherent and captivating story, connecting the dots between different exhibited objects? In co-creation with CNAM (Conservatoire national des arts et métiers), Waag has been prototyping a digital experience for Mingei’s pilot on glass. Developer Lodewijk Loos takes you along the journey towards the first prototype.

Visiting CNAM

The goal of creating this digital experience at CNAM is to engage visitors and give them insight into the process of glass making. The prototype should work on site (in this case in the context of the museum), and should add to the real objects already on display. However, the technology used for the prototype should be unobtrusive in the local situation. Visitors who do not wish to use the technology should not be bothered by it.

In order to get a grasp of the local context at CNAM, Meia Wippoo and Lodewijk Loos of Waag went to Paris in March 2020. There, we had a fruitful co-creation session with a team of museum professionals from CNAM and conceptualized a rough version of the prototype. In the glass section of the museum, we made some observations that were key to the first version of the prototype.

First of all, the glass objects are displayed in vitrines: they cannot be touched or picked up, and cannot be viewed from all angles. Of course, not being able to pick up objects in a museum is normal. However, as a lot of these objects are tools and utensils, being able to do so would contribute to the understanding of the object. We also know from experience in earlier projects, like meSch, that being able to pick up objects leads to more user engagement.

The next thing we noticed is that some objects were related to other objects that were displayed in different rooms of the museum. For example, the glass tools and a glass product, a carafe, were not in the same room. The reason for this is that there are different ways to classify objects. The tools were in the tooling section and the carafe was in a section with artworks. However, these objects are part of the same story that we would like to tell: the process of glass making.

Another observation that we made was that some of the objects key to the story, for example a furnace and a piece of wet paper, were not on display in the museum.

Augmented reality

As we decided upfront, the prototype should help visitors gain insight into the process of glass making. With these observations, we could translate the story of glass into a more generic story. One could say that in the context of crafts, a general pattern is that objects are used with other objects (for example tools with materials) in different parts of the process. That is what we want our digital experience to give insight into.

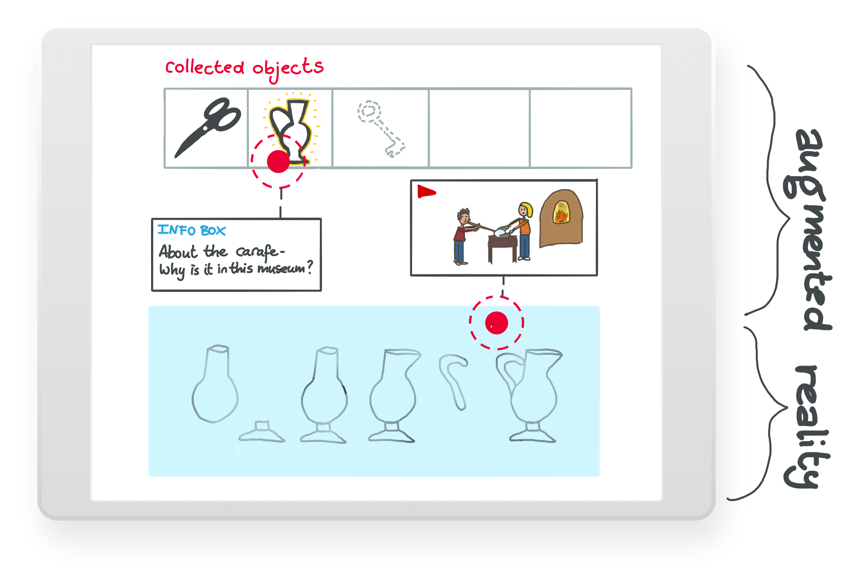

We also concluded that the use of augmented reality (AR) technology could be of value for this prototype. With AR, it is possible to create the sense of picking up (virtual representations of) objects, to use them in another room, and to show objects that are not physically there.

Mark the process

We returned home and worked out several concepts. Next, we distilled the common interaction principles from our concepts. These interaction principles showed similarities to (adventure) games. Adventure games are like a puzzle: you often have to pick up objects, sometimes not yet knowing what for, and use them at another location, sometimes in combination with another object.

One of our concepts, named “Mark the Process”, focused on the carafe. This concept would lend itself to this type of adventure-like (mini) game. The central piece in this game would be the various parts and stages of completion of the carafe. This is how the process of making this type of carafe is currently displayed in CNAM. Wouldn’t it be nice if you could pick up the tools associated with this process in one room, and place them at the right “step” in the other room? We also liked the idea of being able to collect museum objects and take them home for closer inspection.

The use of markers

With this concept in mind, we started implementing a proof of concept as a smartphone app. From previous AR projects, we had experience with the combination of Vuforia (AR framework) and Unity3D (game engine). The former is very well integrated in the latter, making it an ideal tool for (at least) prototyping. Vuforia supports various ways of augmentation, both marker-based and markerless.

Markers are physical signs that are recognized by the app to trigger interaction. Using markers makes the app less dependent on local lighting conditions, which were neither ideal nor constant at CNAM. Recognising a marker, instead of the object itself, generally just works better. Additionally, markers could make it easier for users of our app to see at which locations in the museum they can interact, because they serve as a visual cue. When you’re in a museum with thousands of objects, it is convenient to see immediately (without using a device) which ones are interactable. Finally, markers are easier to use: augmenting an object by placing a marker in front of it is less challenging than having to scan the object, and markers also make it easy to place virtual objects in empty space. In the longer run, the use of markers helps to accomplish a more generic application for different venues with different content, one that allows its content to be authored by curators (as opposed to software developers).

Living room demonstration

Our original intention was to test the prototype at CNAM with random visitors of the museum. But during the development of the app, Covid-19 came around and it became clear that testing the app in a public venue with a real audience would not be possible anytime soon. Furthermore, the Covid-19 situation might even change the way we design things permanently. For example, it might have become undesirable to hand out devices to visitors in a museum or to have installations with touch screens. An AR app that people can run on their own phone should be relatively safe and convenient.

With this in mind, we slightly changed our prototyping strategy and decided to create a living room demonstration. Originally, the prototype was meant to include virtual copies of the museum objects. Lacking museum objects in the developer’s house, we used general building tools and convincing 3D models from online repositories.

The prototype demonstrates a few of the principles. The user can pick up objects and put them back again. Objects can be collected in a treasure chest for later use, and can be used with other objects by using them at a marker placed next to that other object. Referenced media for a collected object are available as background information, information overlays can give hints, and a collection of objects can be used to make simple puzzles. As a gamification element, the user receives badges after completing specific tasks or reaching certain goals.
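To make these principles a bit more concrete, here is a minimal sketch of how the pick-up, treasure chest, use-at-marker and badge mechanics could fit together. It is written in Python purely for illustration; the actual prototype was built with Unity3D and Vuforia, and every name below (`Marker`, `Session`, `scan`, `award_badges`, the marker ids and the object called "tool") is a hypothetical assumption, not taken from the app.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class Marker:
    """A physical marker (hypothetical model, not the prototype's own)."""
    marker_id: str
    holds: Optional[str] = None    # object that can be picked up here
    accepts: Optional[str] = None  # object that can be used at this spot


@dataclass
class Session:
    """Per-visitor state: treasure chest, solved steps and badges."""
    chest: Set[str] = field(default_factory=set)
    solved: Set[str] = field(default_factory=set)
    badges: List[str] = field(default_factory=list)


def scan(session: Session, marker: Marker) -> str:
    """React to a recognised marker and describe what happened."""
    if marker.holds is not None:
        # Pick up the virtual object and keep it in the treasure chest.
        item, marker.holds = marker.holds, None
        session.chest.add(item)
        return f"picked up {item}"
    if marker.accepts is not None:
        if marker.accepts in session.chest:
            # The visitor carries the right object: this step is solved.
            session.solved.add(marker.marker_id)
            return f"used {marker.accepts}"
        # Nothing suitable in the chest yet: show a hint overlay instead.
        return "hint: something is missing here"
    return "nothing to do"


def award_badges(session: Session, puzzle: List[Marker]) -> None:
    """Give a badge once every marker in the puzzle has been solved."""
    done = all(m.marker_id in session.solved for m in puzzle)
    if done and "puzzle complete" not in session.badges:
        session.badges.append("puzzle complete")
```

A visitor would first scan a marker that holds an object, then carry it (virtually) to a marker in another room that accepts it. Keeping all state in the session rather than in the scene is one possible design choice; it would let collected objects be inspected later, away from the markers.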

Next steps

This simple approach allows for a lot of flexibility to create puzzle-like games. For example, a timeline game could be created by changing the physical placement of the markers into another linear layout. One could also imagine having different kinds of visual markers for different kinds of interactions. One type of marker could indicate that an object can be picked up, and another marker could indicate that an object can be used at that spot.
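As a rough illustration of the timeline idea, the check behind such a game could be as small as the sketch below. It assumes the markers are laid out in a row and numbered from 0, and that the app knows which object the visitor has placed at each marker. The function name `misplaced_slots` and the stage names are hypothetical.

```python
from typing import Dict, List


def misplaced_slots(expected_order: List[str], placed: Dict[int, str]) -> List[int]:
    """Return the marker positions that are still empty or hold the wrong object."""
    return [
        position
        for position, expected in enumerate(expected_order)
        if placed.get(position) != expected
    ]


# Hypothetical timeline with three stages laid out left to right.
timeline = ["stage-1", "stage-2", "stage-3"]

# The visitor swapped the last two objects: positions 1 and 2 are wrong.
print(misplaced_slots(timeline, {0: "stage-1", 1: "stage-3", 2: "stage-2"}))
```

An empty result would mean the timeline is complete, which could in turn trigger a badge.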

At this point it is also interesting to think about how these principles can be applied at the other pilot locations. Part of the Mingei project is a pilot in Chios (Greece) on the craft of harvesting and processing mastic from the mastic tree. Would it be feasible to apply the prototype to the local situation over there by augmenting the mastic tools and placing markers on and around a real tree? There is still plenty of work to be done and questions to be answered on the way towards a generic AR application for on-site craft experiences!