Mingei aims to preserve traditional crafts, spread knowledge about them and inspire people to learn new crafts. The project is developing new approaches and learning methods, one of which is movement sonification. How can movement sonification serve Mingei’s objectives of digitising, reproducing and conveying rare know-how? In this article, we explain what movement sonification is and why it was chosen as a method for learning the gestures of glassblowing, one of Mingei’s use cases.

About movement sonification

Sonification is defined as the use of non-speech audio to convey information (Kramer 1993, 185–221), and as the technique of rendering sound in response to data and interactions (Hermann et al. 2011, 1).

Bevilacqua et al. (2016, 385) describe the benefits of movement sonification in their paper as follows: “Movement sonification is considered the auditory feedback that is being created in relation to a movement and/or gesture performed. The idea of using auditory feedback in interactive systems has recently gained momentum in different research fields. In applications such as movement rehabilitation, sport training or product design, the use of auditory feedback can complement visual feedback. It reacts faster than the visual system and can continuously be delivered without constraining the movements.”

Accordingly, sonification is currently used for a wide variety of tasks, such as “driving a car or riding a bike blind, directly finding one’s way in an unfamiliar smoky environment or being able to improve the quality of one’s gestures in real-time” (Parseihian et al. 2016, 1).

In particular, movement sonification systems appear promising for teaching complex techniques and skills by providing users with auditory feedback on their own movements. The purpose is to guide users toward better movement performance during a learning process, and the applications can vary.

So why did researchers choose sound? Hermann et al. (2011, 3) explain that “the motivation to use sound to understand the world (…) comes from many different perspectives (…). Thus, the benefits of using the auditory system as a primary interface for data transmission are derived from its complexity, power, and flexibility.” In other words, sound is considered well suited to communicating information because it resonates with the individual listener and can be employed in many different ways.

Because movement sonification can guide a user to reproduce an expert gesture, the method has proved suitable when the focus is on enhancing the learning experience and improving movement performance.

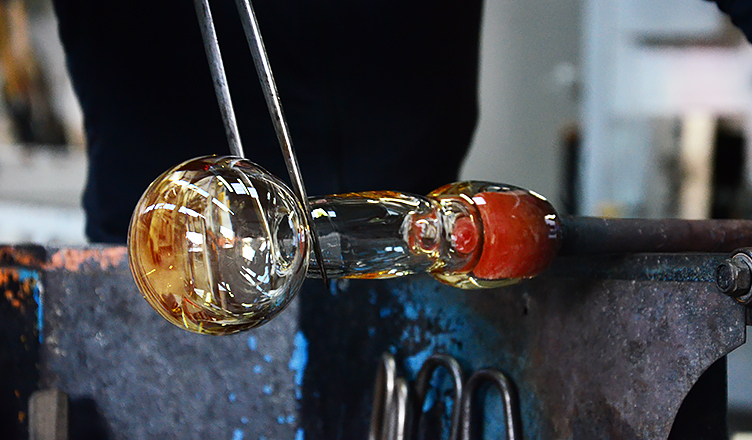

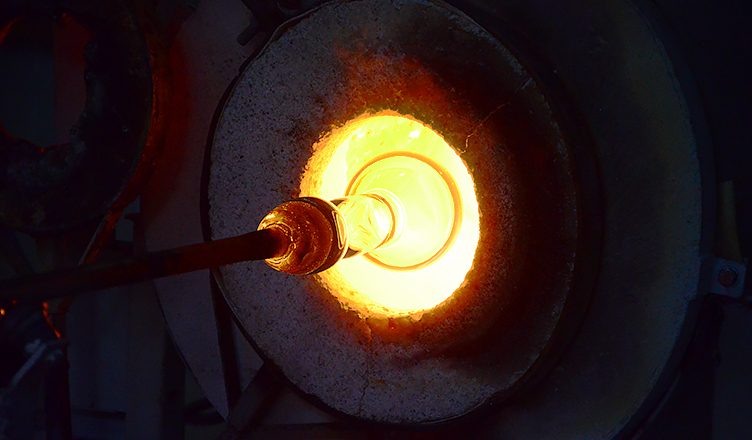

The use case of the glassblower’s craft

In the case of glassblowing, the key challenge is to develop a system that first tracks the human body, then recognizes the gestures the person is executing and, finally, compares them with the expert’s gestures and provides sound feedback. The sonification motivates users to complete all their tasks and gestures by also working toward a musical goal. Following the tempo of the sounds also gives helpful feedback on how well the gestures have been performed and how they need to be improved.

The researchers of Armines are currently applying movement sonification to vocational training as the method most compatible with the particularities of the craft. The motion capture of experts and their gestural skills for the glassblowing use case is being carried out by Armines in collaboration with Cerfav, the National Innovation Center for Glass in France.

For this purpose, recording sessions of the glassblower’s gestural know-how are being organised at Cerfav using high-precision motion capture technologies. More precisely, the expert glassblower wears a special suit equipped with sensors throughout the recording sessions. The recorded data are then categorised to specify which gestures are performed, where one gesture stops and another starts, and which tools are used. This is what we call the “gesture vocabulary”.
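As a rough illustration of what such an annotated recording could look like in code, the Python sketch below stores each gesture as a labelled frame range together with the tool in use. The class name, field names, gesture labels, tool names and frame numbers are all assumptions made for this example; they are not taken from the Mingei recordings.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class GestureSegment:
    """One annotated gesture in a motion capture recording (hypothetical schema)."""
    label: str        # which gesture is performed
    start_frame: int  # first frame of the gesture (inclusive)
    end_frame: int    # frame where the next gesture starts (exclusive)
    tool: str         # tool used during the gesture


def gesture_at(vocabulary: List[GestureSegment], frame: int) -> Optional[GestureSegment]:
    """Return the segment covering the given frame, or None if nothing is annotated there."""
    for segment in vocabulary:
        if segment.start_frame <= frame < segment.end_frame:
            return segment
    return None


# Illustrative annotations only: labels, tools and frame numbers are invented.
vocabulary = [
    GestureSegment("gather", 0, 120, "blowpipe"),
    GestureSegment("shape", 120, 300, "jacks"),
    GestureSegment("blow", 300, 420, "blowpipe"),
]

segment = gesture_at(vocabulary, 150)
print(segment.label if segment else "no gesture")
```

Storing explicit start and end frames makes the boundary between two gestures unambiguous, which is the information the categorisation step is meant to capture.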

The next step is to learn the gestures of a glassblower through gesture recognition and sonic feedback: a user tries to reproduce the gestures of the professional glassblower. Whenever the apprentice’s gesture deviates from the craftsman’s, sound feedback is provided through the pitch fluctuation of a predefined sound. In this way, the apprentice perceives the quality of the sound and knows whether they performed the gesture in the same way as the expert, or whether they have to repeat and correct it.
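One simple way to picture this deviation-to-pitch feedback is sketched below in Python. It is not the Mingei implementation: it assumes that the expert and apprentice recordings are already time-aligned and equally long, that positions are 3D coordinates in metres, and that the predefined sound has a base pitch of 440 Hz that rises by at most one octave. The function names and all default values are hypothetical.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float, float]


def mean_deviation(expert: Sequence[Point], apprentice: Sequence[Point]) -> float:
    """Mean Euclidean distance between two equally long, time-aligned trajectories."""
    if not expert or len(expert) != len(apprentice):
        raise ValueError("trajectories must be non-empty and of equal length")
    total = sum(math.dist(e, a) for e, a in zip(expert, apprentice))
    return total / len(expert)


def feedback_pitch(deviation: float,
                   base_hz: float = 440.0,       # assumed pitch of the predefined sound
                   max_deviation: float = 0.5,   # assumed deviation (m) that saturates the shift
                   max_semitones: float = 12.0   # assumed largest pitch shift (one octave)
                   ) -> float:
    """Map a deviation to a pitch: no deviation keeps the base pitch, larger ones raise it."""
    ratio = min(max(deviation / max_deviation, 0.0), 1.0)  # clamp to [0, 1]
    return base_hz * 2 ** (ratio * max_semitones / 12.0)


expert_path = [(0.0, 0.0, 0.0), (0.1, 0.0, 0.0)]
apprentice_path = [(0.0, 0.0, 0.0), (0.1, 0.2, 0.0)]
print(feedback_pitch(mean_deviation(expert_path, apprentice_path)))
```

In practice the two gestures rarely have the same duration, so a real system would need some form of temporal alignment before comparing them; that step is deliberately left out of this sketch.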

In the video, the installation user is asked to perform, one by one, the gestures that make up the glassblower’s routine. The sonification motivates the user to complete all the tasks and gestures by also reaching a musical goal.

First, the user is invited to observe the expert’s gestures and to hear the correct sonification of those movements. This is what we call here “the original sounds”. Motion parameters have been mapped to acoustic features: the tempo is affected by the movement of the right and left hands along the x-axis, while the panning of the sound is affected by movement along the y-axis.
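The mapping described above can be sketched as a pair of linear rescalings. The Python example below is only an illustration under stated assumptions: it uses a single hand position, normalised coordinates between -1 and 1, a tempo range of 60 to 180 beats per minute, and a pan value from -1 (left) to 1 (right). None of these ranges or names come from the Mingei system.

```python
from typing import Dict, Tuple


def scale(value: float, in_min: float, in_max: float,
          out_min: float, out_max: float) -> float:
    """Linearly rescale value from the input range to the output range, with clamping."""
    if in_max == in_min:
        raise ValueError("input range must not be empty")
    t = (value - in_min) / (in_max - in_min)
    t = min(max(t, 0.0), 1.0)  # keep out-of-range positions at the limits
    return out_min + t * (out_max - out_min)


def map_hand_to_sound(x: float, y: float,
                      x_range: Tuple[float, float] = (-1.0, 1.0),       # assumed x extent
                      y_range: Tuple[float, float] = (-1.0, 1.0),       # assumed y extent
                      tempo_range: Tuple[float, float] = (60.0, 180.0)  # assumed BPM range
                      ) -> Dict[str, float]:
    """Map a hand position to sound parameters: x drives the tempo, y drives the panning."""
    tempo_bpm = scale(x, x_range[0], x_range[1], tempo_range[0], tempo_range[1])
    pan = scale(y, y_range[0], y_range[1], -1.0, 1.0)
    return {"tempo_bpm": tempo_bpm, "pan": pan}


print(map_hand_to_sound(0.0, 0.0))
```

A linear mapping is the simplest choice; other curves could be substituted in `scale` without changing the rest of the sketch.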

The gestures of the installation user are recognized and sonified as well. The quality of the sonification, in terms of how close the tempo or the pitch is to the original sounds, reflects how well the gestures have been performed and recognized. The user performs the gestures one by one until reaching the final gesture, which completes the glass carafe. The sounds mapped to each gesture are layered, creating a complete piece of music at the end of the gestural performance.

The learning process as an enjoyable user experience

Within the learning process developed in Mingei, an apprentice acquires the skill set needed to perform and reproduce the glassblower’s gestures, and gradually improves their performance and technical prowess. Furthermore, the process itself is an enjoyable experience that brings pleasure and motivation, as well as meaning and purpose, to learning this craft.

Learning through auditory feedback makes for a natural, non-intrusive experience that informs users in real time about their performance and gives them the stimulus to continue. Since the end use for Mingei is an installation within a museum, it is also desirable that the visitor’s experience combines entertainment with cultural engagement.

Encouraging new uses of sound can prove to be an excellent, emerging method of embodied learning, that is, learning by doing.

Written by Ioanna Thanou (Armines)

References

Kramer, Gregory. 1993. “Some Organizing Principles for Representing Data with Sound.” In Auditory Display: Sonification, Audification and Auditory Interfaces, 185–221. Santa Fe Institute Studies in the Sciences of Complexity. Addison-Wesley.

Hermann, Thomas, Andy Hunt, John G. Neuhoff (Eds.). 2011. The Sonification Handbook. Logos Verlag, Berlin, Germany.

Bevilacqua, Frédéric, Eric O. Boyer, Jules Françoise, Olivier Houix, Patrick Susini, Agnès Roby-Brami, and Sylvain Hanneton. 2016. “Sensori-motor learning with movement sonification: Perspectives from recent interdisciplinary studies.” Frontiers in Neuroscience, vol. 10, p. 385.

Parseihian, Gaëtan, Charles Gondre, Mitsuko Aramaki, Sølvi Ystad, and Richard Kronland-Martinet. 2016. “Comparison and evaluation of sonification strategies for guidance tasks.” IEEE Transactions on Multimedia, 18(4), 674–686.